The 2026 Frontier Model Map: GPT-5.5, Claude 4.7, Gemini 3, and What Each Does Best

A working map of the 2026 frontier model landscape. GPT-5.5, Claude 4.7 Opus and Sonnet, Gemini 3 Pro, Llama 5, Grok 4, DeepSeek V4, and Qwen 3 graded on what they actually win at, where they leave money, ballpark pricing per million tokens, and a decision matrix for designers and builders picking models for real product stacks.

There is no best frontier model in 2026. The leaderboard fractured into specialists. GPT-5.5 wins general work. Claude 4.7 Opus wins reasoning and agent reliability. Sonnet wins prose and the speed-cost sweet spot. Gemini 3 Pro wins long context. Llama 5 wins open-weight. Grok 4 owns a real-time niche. DeepSeek destroyed the price floor. Qwen 3 owns multilingual.

This is a working map of the models that matter, ballpark pricing per million tokens, the four use cases and what wins each, and the four traps teams fall into when they pick by leaderboard.

The frontier split into specialists in 2026

The 2024 frame was one model getting smarter every six months. The 2026 frame is a stack of specialists, and the product teams winning right now run two or three models behind a routing layer.

Picking one model for everything is the most common 2026 mistake. The cost runs hot on the wrong workloads, and the quality drifts on the workloads where the chosen model is weak. The frontier is a routing problem, not a selection problem.

GPT-5.5, the general workhorse

GPT-5.5 is OpenAI's flagship and the default pick for general product work, the strongest all-around model when you want one API that does almost everything competently. Strong code, strong tool use, strong vision, fast latency, and the most mature ecosystem of any frontier model.

Where it leaves money. Long-form reasoning trails Claude 4.7 Opus. Long-context retrieval trails Gemini 3 Pro. Brand voice and prose taste sit below Sonnet. Pricing: around 5 dollars per million input and 15 per million output. The mid-tier of the closed field.

Claude 4.7 Opus, the reasoning and agent ceiling

Claude 4.7 Opus is Anthropic's top-tier model and the single best reasoning and agent-reliability surface shipped in 2026. The model you pick when the task has to land on the first try. Instruction-following is the cleanest in the field. Format compliance is rock solid. Tool-use stability across long agent runs is why Claude Code, Cursor agent mode, and most serious agent frameworks default to it.

Where it leaves money. Slowest of the closed flagships and the most expensive. Pricing: around 15 dollars input and 75 output per million. The right pick for the highest-stakes calls. The wrong pick for high-volume work.

Claude 4.7 Sonnet, the speed-cost sweet spot

Claude 4.7 Sonnet is the model most production teams should default to in 2026. It carries about ninety percent of Opus quality at roughly a fifth of the per-token price and about twice the speed. Best prose quality in the field. Best brand voice retention. Lowest drift across long conversations. The model designers reach for when the output is going to be read by a human.

Where it leaves money. Slightly weaker than Opus on the hardest reasoning and longest agent runs. Pricing: around 3 dollars input and 15 output per million. The strongest cost-quality ratio of any closed model.

Claude 4.7 Haiku, the high-throughput workhorse

Claude 4.7 Haiku is the cheap fast model in the Anthropic stack, the right pick when the volume is high and the per-call quality bar is moderate. Classification, extraction, structured tagging, fast routing decisions, lightweight chat. Strong instruction following at the cheap tier.

Where it leaves money. Not for nuanced reasoning, long-form writing, or hard agent runs. Pricing: around 1 dollar input and 5 output per million.

Gemini 3 Pro, the long-context and multimodal champion

Gemini 3 Pro is Google's flagship and the strongest model in 2026 on long-context retrieval, document grounding, and native multimodal. The two-million-token effective context window with strong needle-in-a-haystack reliability is unmatched. Native video, audio, and image input handling is the cleanest in the closed field.

Where it leaves money. Writing voice is the weakest of the flagships. Prose reads competent but flat. Brand voice work needs heavy prompting to get past the default register. Pricing: around 2.50 dollars input and 10 output per million. Strong cost ratio for the long-context win.

Llama 5, the open-weight default

Llama 5 is Meta's flagship open-weight family and the best model you can self-host in 2026. The right pick when data residency, cost control, or fine-tuning matter more than absolute quality. The 405-billion-parameter variant lands within striking distance of GPT-5.5 on most general benchmarks.

Where it leaves money. Infrastructure cost to self-host the large variant is real. Provider-hosted Llama 5 lands in the same price band as Sonnet without the prose advantage. Pricing: roughly 1 to 2 dollars per million blended on hosted providers.

Grok 4, the real-time niche pick

Grok 4 is xAI's flagship with native real-time access to the X firehose and an irreverent default voice. It is useful for narrow workloads: news monitoring, sentiment tracking, real-time event analysis, and any product where the AI needs the last sixty seconds of public discourse, not yesterday's training data.

Where it leaves money. Reasoning trails Opus. Code trails GPT-5.5. The voice can be a problem in any product where personality should come from the brand. Pricing: around 5 input and 15 output per million. Same band as GPT-5.5 with a much narrower job.

DeepSeek V4 and R2, the cost destroyers

DeepSeek V4 and R2 are the open-weight reasoning pair that broke the price floor in 2026. V4 is the general model. R2 is the reasoning specialist. Top-tier reasoning quality at roughly a tenth of the closed-model cost. Hosted by DeepSeek or self-hosted from the open weights.

Where it leaves money. Slightly weaker tool-use stability than Claude 4.7. Long-context retrieval lags Gemini 3. Prose taste is below Sonnet. Pricing: around 0.30 dollars input and 1 dollar output per million. Production teams now route high-volume reasoning through DeepSeek and reserve Opus for the calls that have to be perfect.
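The pattern in that last sentence, a cheap model first with Opus reserved for the calls that have to be perfect, can be sketched as a two-step cascade. This is a minimal illustration, not a description of any particular team's setup: `call_model` is a hypothetical stand-in for whatever client you use, `is_acceptable` is a placeholder for your own validation check, and the model strings are illustrative labels, not real API model identifiers.

```python
from typing import Callable

# Hypothetical labels for illustration, not real API model identifiers.
CHEAP_MODEL = "deepseek-r2"
PREMIUM_MODEL = "claude-4.7-opus"


def cascade(
    prompt: str,
    call_model: Callable[[str, str], str],
    is_acceptable: Callable[[str], bool],
    must_be_perfect: bool = False,
) -> tuple[str, str]:
    """Return (model_used, output).

    Calls flagged must_be_perfect go straight to the premium model.
    Everything else tries the cheap model first and escalates only
    when the validation check rejects the cheap output.
    """
    if not must_be_perfect:
        draft = call_model(CHEAP_MODEL, prompt)
        if is_acceptable(draft):
            return CHEAP_MODEL, draft
    return PREMIUM_MODEL, call_model(PREMIUM_MODEL, prompt)
```

The design choice worth noting is that escalation depends on a check you can run automatically. Without a reliable `is_acceptable`, the cascade silently ships cheap-model failures.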

Qwen 3, the open multilingual default

Qwen 3 is Alibaba's open-weight family and the strongest open model on multilingual workloads. The right pick when the product ships in languages beyond English and Mandarin. Strong on Asian languages, Arabic, and the long tail of regional languages where Llama 5 starts to wobble.

Where it leaves money. English-only benchmarks land slightly behind Llama 5. The hosted-provider story is less mature outside Alibaba Cloud. Pricing similar to Llama 5 on shared providers, very cheap when self-hosted.

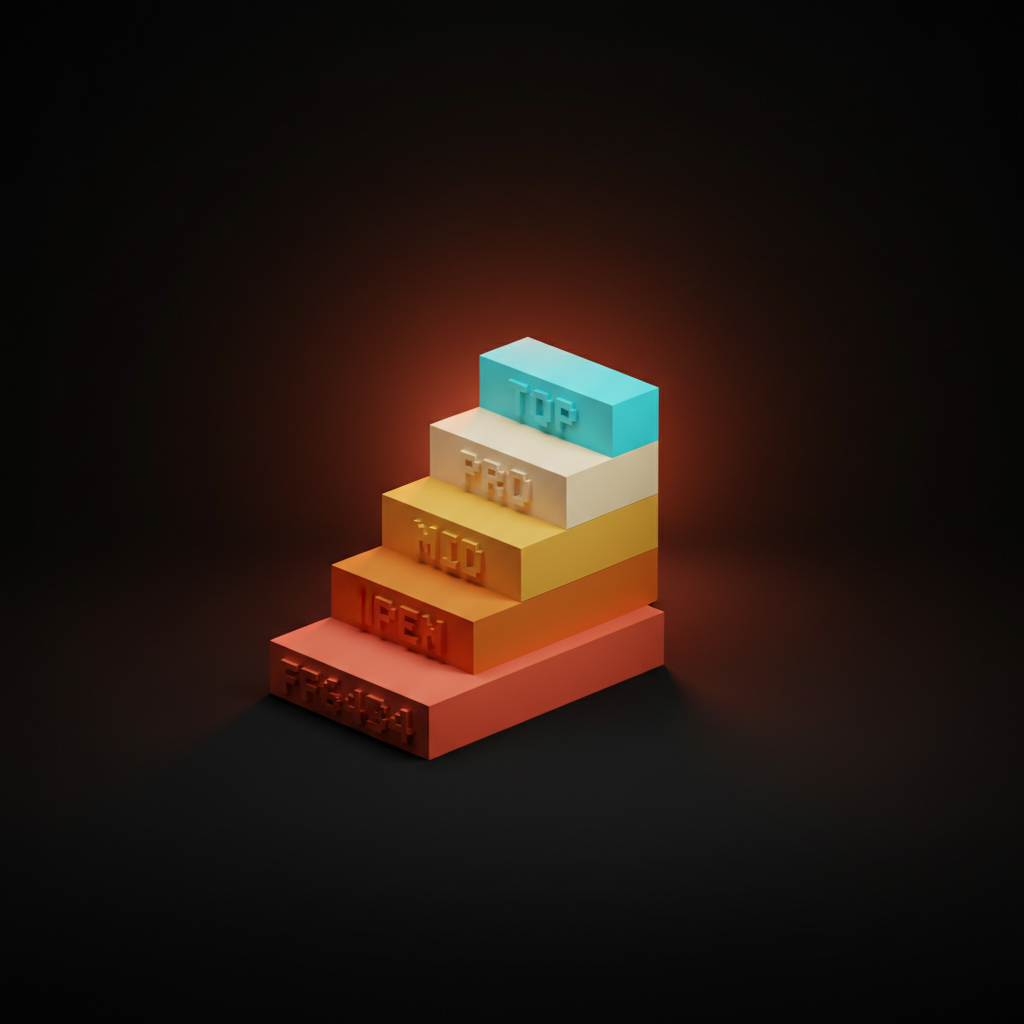

Pricing in 2026, what each million tokens actually costs

Pricing has stratified into four tiers. The cheap-per-token models are not always the cheap-per-job models when reasoning depth and rework rates enter the math.

| Model | Input ($/1M) | Output ($/1M) | Tier |
|---|---|---|---|
| Claude 4.7 Opus | 15 | 75 | Top |
| GPT-5.5 | 5 | 15 | Pro |
| Grok 4 | 5 | 15 | Pro |
| Claude 4.7 Sonnet | 3 | 15 | Pro |
| Gemini 3 Pro | 2.50 | 10 | Mid |
| Llama 5 (hosted) | 1 to 2 | 1 to 2 | Mid |
| Qwen 3 (hosted) | 1 to 2 | 1 to 2 | Mid |
| Claude 4.7 Haiku | 1 | 5 | Mid |
| DeepSeek V4 | 0.30 | 1 | Open |
| DeepSeek R2 | 0.30 | 1 | Open |

Per-job cost is what matters. A cheap model that needs three retries on a hard task is more expensive than an Opus call that lands once. Run the math on real traffic before locking the routing layer.
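One way to run that math is sketched below. The prices are the ballpark figures from the pricing table. Everything else is an assumption made for illustration: the token counts, the success rates, the per-failure rework cost, and the simplification that attempts are independent, which makes the expected number of attempts `1 / success_rate`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_m: float   # dollars per million input tokens
    output_per_m: float  # dollars per million output tokens


# Ballpark prices from the pricing table.
PRICES = {
    "Claude 4.7 Opus": Price(15.0, 75.0),
    "DeepSeek V4": Price(0.30, 1.0),
}


def cost_per_attempt(price: Price, input_tokens: int, output_tokens: int) -> float:
    """Token cost in dollars for a single call."""
    return (
        input_tokens * price.input_per_m + output_tokens * price.output_per_m
    ) / 1_000_000


def expected_cost_per_job(
    price: Price,
    input_tokens: int,
    output_tokens: int,
    success_rate: float,
    failure_cost: float = 0.0,
) -> float:
    """Expected dollars spent until the first accepted output.

    Assumes independent attempts: expected attempts are 1 / success_rate
    and expected failures are (1 - success_rate) / success_rate.
    failure_cost is a hypothetical per-failure charge for review or rework.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    attempt = cost_per_attempt(price, input_tokens, output_tokens)
    expected_failures = (1 - success_rate) / success_rate
    return attempt / success_rate + failure_cost * expected_failures
```

With 4,000 input and 1,000 output tokens, one Opus attempt costs about $0.135 and one DeepSeek V4 attempt about $0.0022. On token prices alone the cheap model stays cheaper even at a 25 percent success rate. In this sketch the ordering only flips once each failed attempt carries its own cost: at a hypothetical $0.10 of rework per failure, the cheap path comes to roughly $0.31 per job. That is why rework rates belong in the calculation.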

The four design-side use cases

Research synthesis, copy QA, image generation pipelines, and prompt-as-component are the four workloads that decide which model earns its API key. Each has a different winner.

Research synthesis, where Gemini 3 Pro wins

Research synthesis is the long-context workload, dropping ten reports into a prompt and getting a clean grounded summary. Gemini 3 Pro wins on retrieval reliability, citation quality, and effective window past one million tokens. Sonnet is a strong second at shorter horizons. The math favors Gemini once inputs cross two hundred thousand tokens. For workflows where window efficiency matters more than raw size, see context efficiency.
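The two-hundred-thousand-token crossover can be turned into a size check that runs before the call. The sketch below is illustrative only: the four-characters-per-token estimate is a crude heuristic for English text, not a real tokenizer, and the model strings are hypothetical labels.

```python
LONG_CONTEXT_THRESHOLD = 200_000  # tokens, the crossover point named above
CHARS_PER_TOKEN = 4               # rough English-text heuristic, an assumption


def estimate_tokens(documents: list[str]) -> int:
    """Approximate the combined token count of all documents."""
    total_chars = sum(len(doc) for doc in documents)
    return total_chars // CHARS_PER_TOKEN


def pick_synthesis_model(documents: list[str]) -> str:
    """Route large inputs to the long-context model, smaller ones to Sonnet."""
    if estimate_tokens(documents) > LONG_CONTEXT_THRESHOLD:
        return "gemini-3-pro"
    return "claude-4.7-sonnet"
```

In a real pipeline you would swap the heuristic for the provider's own token counter, since the estimate can be well off for code, tables, and non-English text.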

Copy QA, where Claude 4.7 Sonnet wins

Copy QA is brand voice review, microcopy critique, and tone consistency at scale. Sonnet has the best taste, the cleanest prose, and the lowest drift across long sessions. Pair it with a structured rubric and a brand voice Claude Skills pack and the eval pipeline runs unattended.

Image generation pipelines, where the routing matters

Image generation pipelines are not won by a single model, they are won by routing. The prompt-shaping winner in 2026 is GPT-5.5 paired with a dedicated image model on the back end. Sonnet is a strong second when brand voice has to live in the prompt. The image model itself is a separate decision and changes faster than the language layer.

Prompt-as-component, where Claude 4.7 Opus wins

Prompt-as-component is the workload where a prompt becomes a reusable production primitive, with strict format compliance, structured output, and tool use across long agent runs. Opus wins on instruction-following, format compliance, and tool-use stability. For agentic IDE work, see the AI code editor comparison. For agent UI patterns, the model under the hood is almost always Opus on the calls that have to land.
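As a rough illustration of what treating a prompt as a production primitive can mean, the sketch below wraps a template together with the output contract it must satisfy. It is one possible shape, not a standard: `PromptComponent` and `call_model` are hypothetical names, and the contract here is simply a JSON object containing a set of required keys.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PromptComponent:
    template: str              # str.format placeholders, no literal braces
    required_keys: frozenset   # keys the JSON reply must contain
    max_attempts: int = 2

    def run(self, call_model: Callable[[str], str], **variables: str) -> dict:
        """Render the prompt, call the model, and enforce the output contract."""
        prompt = self.template.format(**variables)
        last_error = None
        for _ in range(self.max_attempts):
            raw = call_model(prompt)
            try:
                parsed = json.loads(raw)
            except json.JSONDecodeError as exc:
                last_error = exc
                continue
            if isinstance(parsed, dict) and self.required_keys.issubset(parsed):
                return parsed
            last_error = ValueError("reply is missing required keys")
        raise ValueError(
            f"no compliant output after {self.max_attempts} attempts"
        ) from last_error
```

A model with strong format compliance returns on the first pass through the loop. A weaker one burns retries, and that is where the cost of poor compliance shows up.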

The four-use-case decision matrix

| Use case | Pick | Why |
|---|---|---|
| Research synthesis | Gemini 3 Pro | Long context, citation quality, reliable grounding past 200K tokens. |
| Copy QA | Claude 4.7 Sonnet | Best prose taste, lowest drift, strongest brand voice retention. |
| Image gen pipelines | GPT-5.5 (prompt) + dedicated image model | Best prompt-shaping with the broadest provider integrations. |
| Prompt-as-component | Claude 4.7 Opus | Best instruction-following, format compliance, tool-use stability. |

The pairings matter. Few production teams run on a single model in 2026. Most settle on two or three behind a routing layer that picks per call.
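At its simplest, a routing layer that picks per call is a lookup from use case to model. The sketch below encodes the decision matrix as data. The enum names and model strings are illustrative labels, not real API identifiers, and the fallback to GPT-5.5 reflects its role in this article as the general default.

```python
from enum import Enum
from typing import Optional


class UseCase(Enum):
    RESEARCH_SYNTHESIS = "research_synthesis"
    COPY_QA = "copy_qa"
    IMAGE_PROMPTING = "image_prompting"
    PROMPT_COMPONENT = "prompt_component"


# The decision matrix, expressed as data so it can be re-evaluated
# without touching the calling code.
ROUTES = {
    UseCase.RESEARCH_SYNTHESIS: "gemini-3-pro",
    UseCase.COPY_QA: "claude-4.7-sonnet",
    UseCase.IMAGE_PROMPTING: "gpt-5.5",
    UseCase.PROMPT_COMPONENT: "claude-4.7-opus",
}

DEFAULT_MODEL = "gpt-5.5"  # general-purpose fallback for untagged calls


def route(use_case: Optional[UseCase]) -> str:
    """Return the model label for a call, or the default when untagged."""
    if use_case is None:
        return DEFAULT_MODEL
    return ROUTES.get(use_case, DEFAULT_MODEL)
```

Keeping the table as plain data means the quarterly re-evaluation becomes a one-line change per lane.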

Want help picking the right frontier model for your product and standing up the routing so the cost and quality math both work? Hire Brainy. ClaudeBrainy ships Skill packs and prompt libraries that get the model layer right. AppBrainy ships full product builds for teams that want their AI to actually ship features, not demos.

Where each model lives in real product stacks

The leaderboard is one thing, the stack is another. The models covered above have settled into recognizable lanes.

GPT-5.5 sits at the front of consumer chat and the default lane in any new build that wants one API. Opus sits behind the highest-stakes agent calls and prompt-as-component primitives. Sonnet sits in long-running brand and writing surfaces. Haiku sits in high-volume background jobs. Gemini 3 Pro sits in document-heavy and multimodal lanes. Llama 5 sits in regulated, data-residency-bound, and cost-controlled stacks. Grok 4 sits in real-time-news niches. DeepSeek sits in the high-volume reasoning lane where cost would have killed the project. Qwen 3 sits in multilingual and Asia-Pacific stacks.

Four traps when teams pick by benchmark

First. The leaderboard trap. A team picks the model topping a benchmark in March and it is no longer the right pick by July. Fix: pick by use-case fit and re-evaluate the routing layer every quarter.

Second. The single-model trap. A team locks one model into the entire stack and hits a wall on the workload it does not win. Fix: route by job, not by contract.

Third. The cheap-token trap. A team optimizes for input price and pays in retries, rework, and quality drift. Fix: model per-job cost before the rollout.

Fourth. The voice-mismatch trap. A team uses a flat-voice model for brand-facing copy and the work reads dead. Fix: route brand copy through Sonnet, the rest through whatever wins on cost.

FAQ

What is the best AI model in 2026?

No single best. GPT-5.5 wins general work, Claude 4.7 Opus wins reasoning and agents, Sonnet wins prose and brand voice, Gemini 3 Pro wins long context, Llama 5 wins open-weight, DeepSeek wins cost. Match the model to the use case.

Is Claude 4.7 better than GPT-5.5?

Different shapes of better. GPT-5.5 is the better default for general product work and the broadest ecosystem. Opus is better on reasoning, agent reliability, and instruction-following. Sonnet is better on prose. Most production stacks now run both behind a router.

What is the cheapest frontier model in 2026?

DeepSeek V4 and R2. Around 0.30 dollars input and 1 dollar output per million. Roughly a tenth the cost of the closed flagships at top-tier reasoning quality.

Which model has the longest context window?

Gemini 3 Pro. The two-million-token effective window with strong retrieval reliability is the field leader.

What is the best open-weight model in 2026?

Llama 5 for English-first general work. Qwen 3 for multilingual. DeepSeek V4 and R2 for reasoning at scale.

The shift the frontier map actually unlocks

The frontier in 2026 is not a single model getting smarter. It is a stack of specialists that lets a small team ship the work of a much larger one when they route by job. The teams winning are not the ones with the best model contract, they are the ones with the best routing logic.

There is no best model in 2026, only best-for-this-job, and the teams winning are the ones routing by use case instead of leaderboard.

If your team is comparing models and the conversation is stuck on which one tops the latest benchmark, the conversation is the problem. Map the workloads, pick the model that wins each, run a two-week trial on real traffic, and let the cost-quality math make the call.

If you want help picking the right frontier model and standing up the routing layer, hire Brainy. ClaudeBrainy ships Skill packs and prompt libraries that get the model layer right. AppBrainy ships full product builds for teams that want their AI to ship features, not demos.
