Brand Systems for the AI Generation Era: Designing for 10,000 Outputs

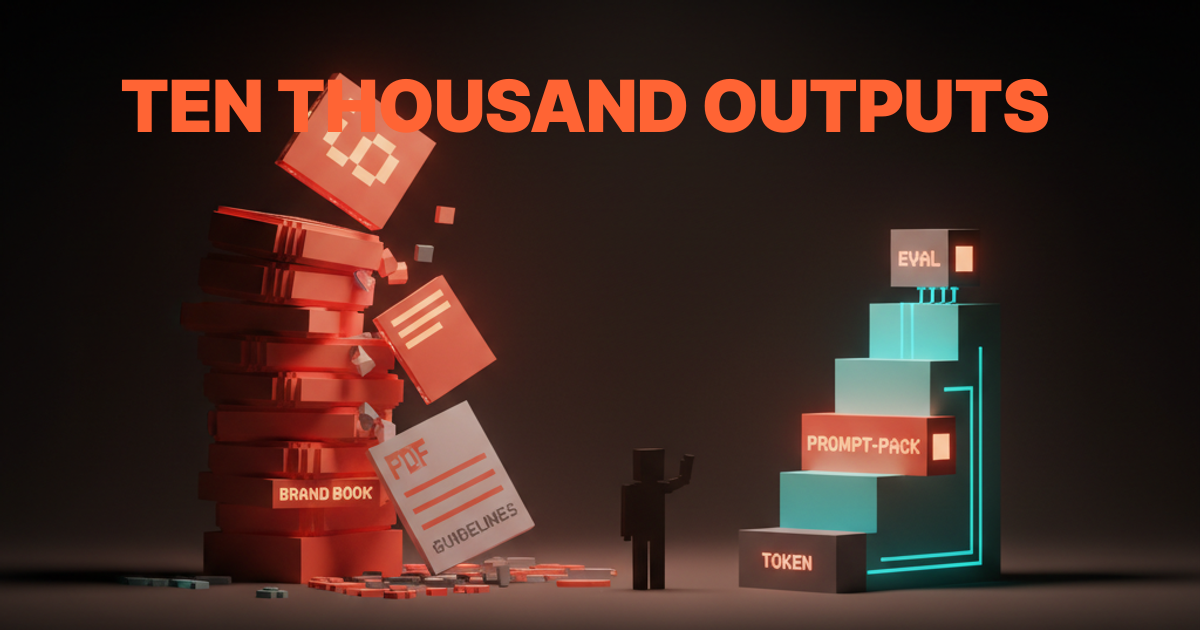

Brand systems were built for an era when humans made every asset. In 2026 an AI ships ten thousand assets a day for one brand, and the classic logo plus palette plus voice doc stack falls apart. Here is what replaces it.

Brand systems were built for a world where one designer made one asset a week. In 2026 a model produces ten thousand assets a day for a single brand, and the classic stack of logo lockup, palette swatches, type pairings, and a voice document does not survive that volume. The brand books that won awards in 2018 are the brand books leaking the most drift right now.

The break is not subtle. Klarna ran an AI ad campaign in 2024 that looked off in ways the brand team could not patch fast enough. Coca-Cola's Create Real Magic generated tens of thousands of consumer images that ranged from on-brand to alarming. Heinz used AI image generation in 2022 and got lucky. Most companies running the same playbook in 2026 are not getting lucky.

The fix is not a thicker brand book. The fix is a different shape of system. Constraints machines can read. Prompt packs that ship with the brand. Evals that measure drift in real time. A human director who edits, not draws.

The classic brand system was built for human throughput

The classic brand system is an artifact pile. Logo files, color swatches, type specimens, motif sheets, photography rules, voice adjectives, layout grids, and a long PDF that ties them together. Every part assumes a human reads it and makes a judgment call before each new asset ships.

That assumption is dead. When the AI is the operator, the artifact pile becomes a stack of unstructured opinions the model has to reconstruct on every render. The model fills the gaps with whatever the training data biases toward, and the brand drifts a little further on every output.

A brand system built for human throughput leaks at AI throughput. The classic stack is not wrong; it is incomplete. It needs a second layer the human era never required.

Voice adjectives are not a brand system

"Warm but professional." "Confident but humble." "Bold but inviting." Every brand book in the last fifteen years has a paragraph like this and every one is useless to a model. The words have no measurable target. The model produces something the writer rejects, and the only fix is for a human to redraft the line by hand, which is exactly the bottleneck the AI was supposed to remove.

A brand system that runs on adjectives is a brand system the AI cannot follow. The same shape shows up in palette descriptions ("warm earth tones"), type guidance ("modern but timeless"), photography rules ("authentic moments"), and motion principles ("playful but considered"). Every phrase was written for a human who already had taste. None were written for a system that needs a number.

The replacement is structured guidance. A voice rubric with measurable behaviors. A palette with named tokens and contrast targets. A type stack with specific weights and ratios. Photography rules with bounded categories the model can match. The system shifts from prose to spec.

The replacement is constraints, tokens, evals, prompt packs

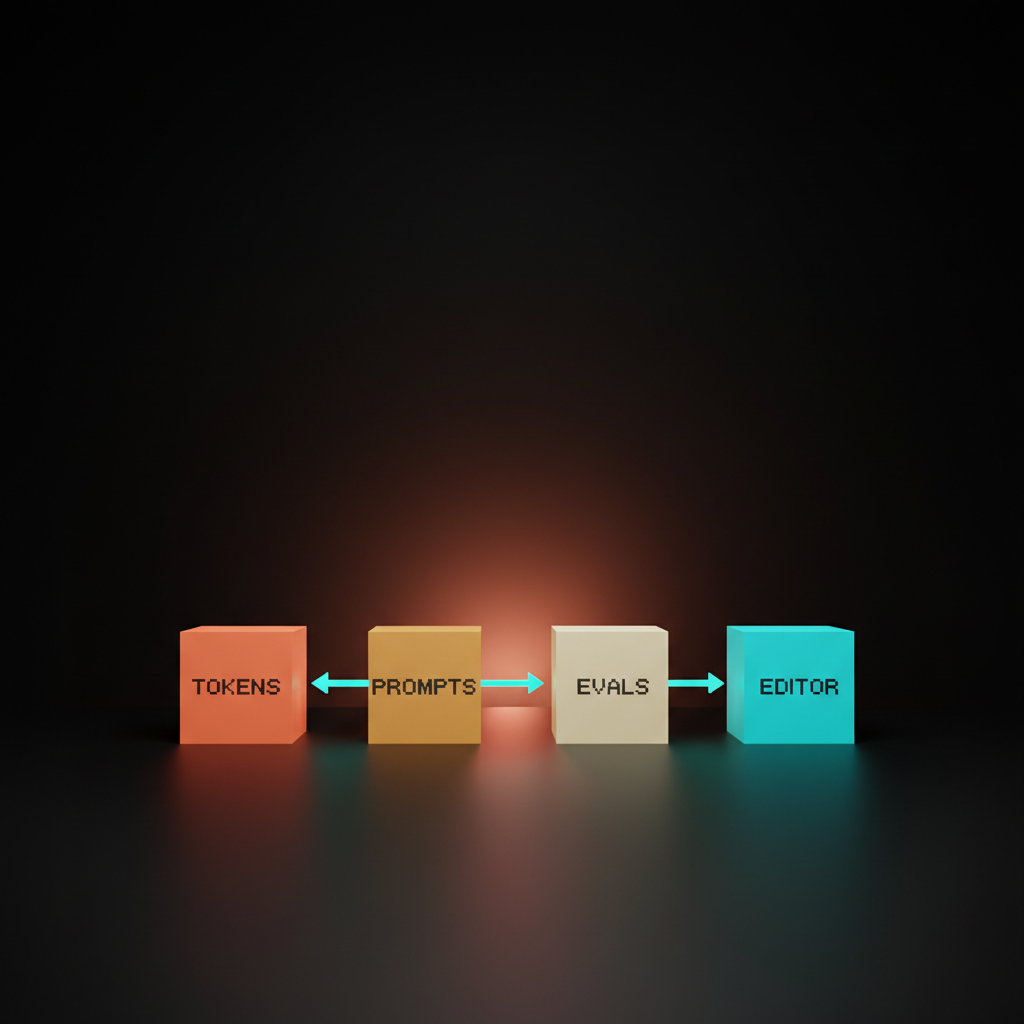

An AI-era brand system has four parts the classic stack does not.

Part one. Machine-readable tokens. The brand spec lives as structured values: color tokens, type tokens, ratio tokens, motif tokens, voice tokens that name behaviors instead of moods. The format does not matter. The AI reads them as values, not paragraphs.

Part two. Prompt packs. The model-facing version of the brand guidelines. Instructions the AI receives whenever it generates an asset, written for the model and versioned alongside the spec. Without a prompt pack the model invents its own brand on every call.

Part three. Brand evals. Automated checks that score AI output against the spec. Contrast checks, voice rubric scores, layout grammar tests, motif compliance, off-brand pattern detection. Without evals the system has no way to catch drift before it ships.

Part four. The editor. The human role shifts from designing every output to setting constraints, reviewing eval results, curating the edges, and updating the spec when reality changes. The human is the governance layer, not the production layer.

Tokens are the new brand book

A token is a structured spec the model can read. Color stops being "warm coral" and becomes a hex value with a contrast minimum and a usage rule. Type stops being "modern but readable" and becomes a font stack with weight ranges, line-height ratios, and tracking values. Voice stops being "warm but professional" and becomes a rubric: sentence length under twenty words, lead with the answer, no filler openings, no hedge phrases.
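Here is a minimal sketch of the tokens described above, written as typed Python values. Every name and value in it (`BrandTokens`, the coral hex, the font stack, the banned phrases) is hypothetical and chosen for illustration. Real token systems such as Geist use their own formats, and the point here is only that each rule becomes a value a model or a script can read.

```python
from dataclasses import dataclass, asdict
import json


@dataclass(frozen=True)
class ColorToken:
    name: str
    hex: str             # sRGB hex value the model must use verbatim
    min_contrast: float  # minimum contrast ratio against the paired background
    usage: str           # usage rule, stated as a constraint rather than a mood


@dataclass(frozen=True)
class TypeToken:
    name: str
    font_stack: tuple[str, ...]
    weight_range: tuple[int, int]  # inclusive min and max font weight
    line_height: float             # multiple of the font size
    tracking_em: float             # letter spacing in em units


@dataclass(frozen=True)
class VoiceRubric:
    sentence_word_limit: int          # every sentence must stay under this many words
    lead_with_answer: bool
    banned_openings: tuple[str, ...]  # filler openings
    banned_hedges: tuple[str, ...]    # hedge phrases


@dataclass(frozen=True)
class BrandTokens:
    version: str
    colors: tuple[ColorToken, ...]
    typography: tuple[TypeToken, ...]
    voice: VoiceRubric


# Hypothetical example values, not any real brand's spec.
BRAND = BrandTokens(
    version="1.0.0",
    colors=(
        ColorToken("coral-500", "#FF6F61", 4.5, "Accents and CTAs only; never body text."),
        ColorToken("ink-900", "#1A1A1A", 4.5, "Body text on light backgrounds."),
    ),
    typography=(
        TypeToken("body", ("Inter", "Helvetica Neue", "Arial", "sans-serif"), (400, 700), 1.5, 0.0),
    ),
    voice=VoiceRubric(
        sentence_word_limit=20,
        lead_with_answer=True,
        banned_openings=("Great question", "In today's world"),
        banned_hedges=("arguably", "perhaps", "somewhat"),
    ),
)


def to_json(tokens: BrandTokens) -> str:
    """Serialize the spec so prompt packs and evals can read the same values."""
    return json.dumps(asdict(tokens), indent=2)
```

Calling `to_json(BRAND)` yields one JSON document that every downstream tool can share. Freezing the dataclasses is a deliberate choice in this sketch: changing a value means publishing a new spec version, not silently editing the old one.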

Vercel's Geist token system is the cleanest live example. The brand ships as code. Every surface across v0, the docs, the marketing site, and the AI-generated artifacts pulls from the same token graph. The brand stays consistent because the values are consistent, not because a human is policing it.

The pattern is not new. Stripe and Figma have run their brand through their design system for years. The shift in 2026 is that this pattern is now table stakes for any brand whose AI is shipping assets in volume, not a nice-to-have. See brand identity pricing for how this changes scoping conversations.

Prompt packs are part of the brand system now

A prompt pack is the model-facing version of the brand guidelines. It is the system prompt, few-shot examples, and structured constraints the AI receives whenever it generates an asset on behalf of the brand. A brand system that does not ship one is a brand system that hands the AI a blank slate.

Prompt packs are versioned, named, and scoped. There is one for social copy, one for product UI copy, one for image generation, one for video script generation, one for ad variations. Each pack pulls from the token spec and adds the model-facing instructions.
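Here is a minimal sketch of how a scoped, versioned prompt pack might be compiled from the token spec. The token dictionary, the scope rules, and the `build_prompt_pack` helper are all hypothetical. The idea it shows is that the pack is generated from the same values the rest of the system reads, and its name carries the spec version, so any output can be traced back to the exact instructions that produced it.

```python
from dataclasses import dataclass

# Hypothetical token values; a real pack would load these from the shared token spec.
TOKENS = {
    "version": "1.0.0",
    "colors": {"coral-500": "#FF6F61", "ink-900": "#1A1A1A"},
    "voice": {
        "sentence_word_limit": 20,
        "banned_openings": ["Great question", "In today's world"],
        "banned_hedges": ["arguably", "perhaps", "somewhat"],
    },
}

# One extra instruction per scoped pack. The scopes follow the list above; the wording is illustrative.
SCOPE_RULES = {
    "social_copy": "Write a single post of at most 280 characters.",
    "product_ui_copy": "Write interface strings of at most eight words.",
    "image_generation": "Describe exactly one image per request.",
    "video_script": "Write the script as numbered scene beats.",
    "ad_variations": "Return each variation on its own line.",
}


@dataclass(frozen=True)
class PromptPack:
    name: str                              # "<scope>@<spec version>"
    scope: str
    spec_version: str                      # the token spec this pack was compiled from
    system_prompt: str
    few_shot: tuple[tuple[str, str], ...]  # (request, on-brand output) pairs


def build_prompt_pack(scope: str, tokens: dict,
                      few_shot: tuple[tuple[str, str], ...] = ()) -> PromptPack:
    """Compile the model-facing instructions for one scope from the token spec."""
    scope_rule = SCOPE_RULES[scope]  # KeyError means no pack exists for this scope
    version = tokens["version"]
    lines = [f"You produce assets on behalf of the brand (spec version {version})."]
    if scope == "image_generation":
        palette = ", ".join(f"{name} {value}" for name, value in tokens["colors"].items())
        lines.append(f"Use only these brand colors: {palette}.")
    else:
        voice = tokens["voice"]
        lines += [
            f"Keep every sentence under {voice['sentence_word_limit']} words.",
            "Lead with the answer.",
            "Never open with: " + "; ".join(voice["banned_openings"]) + ".",
            "Never use these hedges: " + ", ".join(voice["banned_hedges"]) + ".",
        ]
    lines.append(scope_rule)
    return PromptPack(
        name=f"{scope}@{version}",
        scope=scope,
        spec_version=version,
        system_prompt="\n".join(lines),
        few_shot=tuple(few_shot),
    )
```

The resulting `system_prompt` and `few_shot` pairs would then go to whichever model API the team uses. That call is deliberately left out, because it varies by vendor.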

Anthropic and Linear both ship internal prompt packs that govern how their writing AI handles voice. Release notes, changelogs, and product copy stay in voice across hundreds of outputs without a human rewriting every line. Companies still treating prompt design as ad hoc are paying the cost in drift.

Brand evals turn taste into tests

A brand eval is an automated check that scores AI output against the brand spec. The eval can be a contrast checker, a voice rubric scorer, a layout grammar validator, a motif compliance scanner, or an off-brand pattern detector. The system measures itself before a human does.
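Two of the evals listed above are easy to sketch. The contrast check uses the WCAG 2.x relative-luminance and contrast-ratio formulas. The voice check scores text against a hypothetical rubric: a 20-word sentence limit, banned filler openings, and banned hedges. "Lead with the answer" is deliberately left out because it does not reduce to a string rule; a team might hand it to a model-graded or human check instead. The function names and phrase lists are assumptions for illustration.

```python
import re


def _linear_channel(value: int) -> float:
    """Convert one 0-255 sRGB channel to linear light (WCAG 2.x formula)."""
    c = value / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear_channel(r) + 0.7152 * _linear_channel(g) + 0.0722 * _linear_channel(b)


def contrast_ratio(fg: str, bg: str) -> float:
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def contrast_eval(fg: str, bg: str, min_ratio: float = 4.5) -> bool:
    """Pass if the pair meets the token's contrast minimum (4.5 is WCAG AA for body text)."""
    return contrast_ratio(fg, bg) >= min_ratio


# Hypothetical rubric values, mirroring the voice rubric described earlier.
FILLER_OPENINGS = ("great question", "in today's world")
HEDGES = ("arguably", "perhaps", "somewhat")


def voice_eval(text: str, word_limit: int = 20) -> list[str]:
    """Return the rubric rules the text breaks; an empty list means it passes."""
    failures = []
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    for sentence in sentences:
        if len(sentence.split()) >= word_limit:
            failures.append("sentence too long")
    lowered = text.strip().lower()
    if lowered.startswith(FILLER_OPENINGS):
        failures.append("filler opening")
    for hedge in HEDGES:
        if re.search(rf"\b{re.escape(hedge)}\b", lowered):
            failures.append(f"hedge: {hedge}")
    return failures
```

Both checks return plain values, so they can run in CI or in a publishing pipeline before an asset ships.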

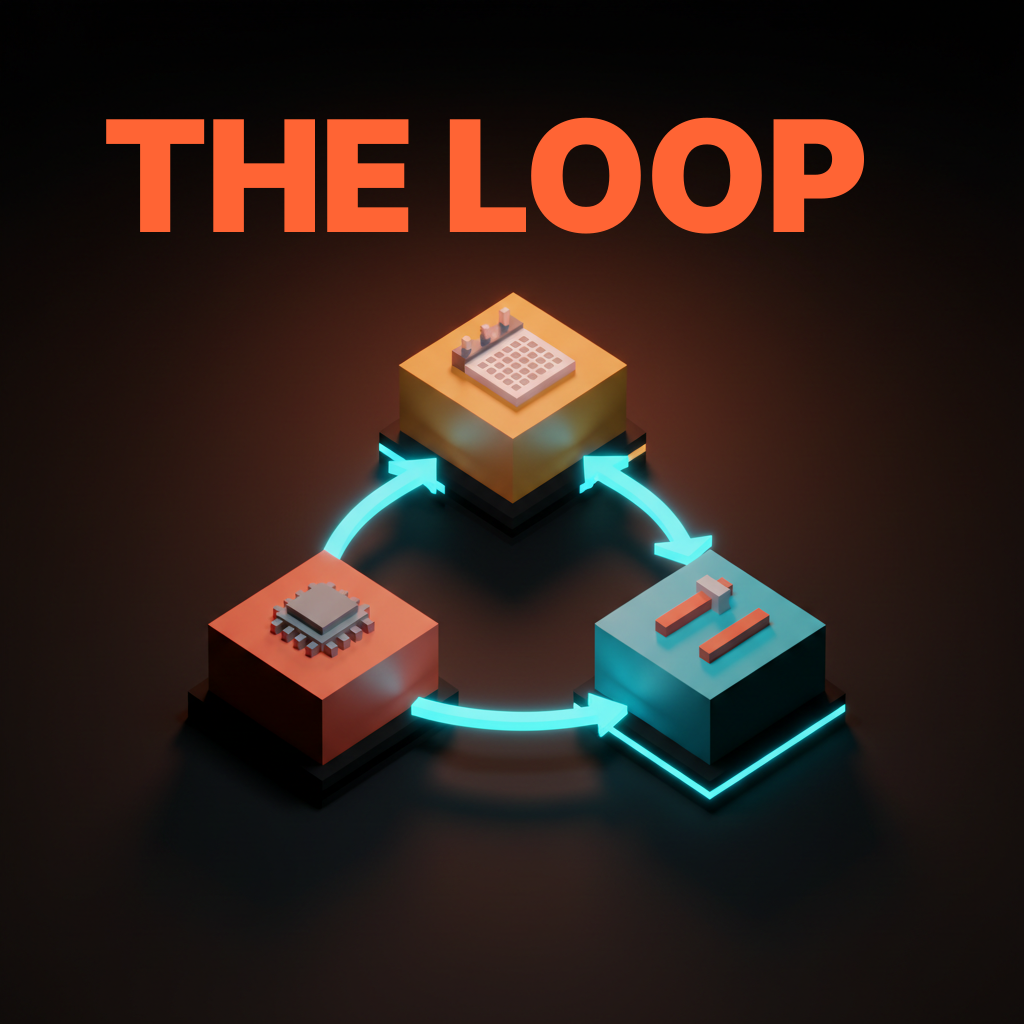

Without evals the brand drifts in a week. With evals the system catches drift on the next render and the editor corrects the spec instead of every individual asset. The loop is generate, eval, tune. Every cycle pulls the brand back to center.
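The generate, eval, tune loop can be sketched in a few functions. The candidate list stands in for whatever model the team calls (no model API is assumed here), the two evals are simplified voice checks with hypothetical rule names, and the threshold in `tuning_report` is an arbitrary illustrative number. The design choice that matters is that failures are tallied per rule, so the editor sees which part of the spec is drifting instead of a pile of rejected assets.

```python
import re
from collections import Counter
from typing import Callable

# An eval takes one asset (text, in this sketch) and returns the names of the rules it breaks.
Eval = Callable[[str], list[str]]

HEDGES = ("arguably", "perhaps", "somewhat")  # hypothetical rubric values


def sentence_length_eval(text: str, limit: int = 20) -> list[str]:
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return ["sentence_length"] if any(len(s.split()) >= limit for s in sentences) else []


def hedge_eval(text: str) -> list[str]:
    lowered = text.lower()
    found = any(re.search(rf"\b{re.escape(h)}\b", lowered) for h in HEDGES)
    return ["hedge"] if found else []


def run_cycle(candidates: list[str], evals: list[Eval]) -> tuple[list[str], Counter]:
    """Generate and eval steps: ship passing assets, tally failures per rule."""
    shipped: list[str] = []
    failures: Counter = Counter()
    for asset in candidates:
        broken = [rule for ev in evals for rule in ev(asset)]
        if broken:
            failures.update(broken)  # held back from shipping
        else:
            shipped.append(asset)
    return shipped, failures


def tuning_report(failures: Counter, total: int, threshold: float = 0.1) -> list[str]:
    """Tune step: rules failing on more than `threshold` of outputs point at the spec
    or the prompt pack, not at individual assets, so the editor fixes them there."""
    return [rule for rule, count in failures.most_common() if count / total > threshold]
```

In use, each batch of model output goes through `run_cycle`, and `tuning_report` tells the editor which rule to tighten in the spec or the prompt pack before the next batch.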

The companies running serious AI-era brand systems all run brand evals. Anthropic runs voice evals on AI-generated copy. Stripe runs layout grammar evals on its docs. Vercel runs token compliance evals on every shipped surface. The brands without eval loops are the brands with the most public AI brand failures.

The brand director is now an editor, not a maker

When the AI makes the assets, the human role shifts. The brand director used to be the maker. They specified the system, then made the work, then reviewed work made by junior designers. In an AI-era brand system the director sets constraints, reviews eval results, curates the edges, and updates the spec when reality changes.

This is not a demotion. It is a higher-leverage role. A director who governs a system that ships ten thousand on-brand assets a day has more impact than a director who personally approves fifty assets a week. The trade is that the director has to be fluent in the spec format, the prompt pack, and the eval rubric, not just in taste.

The change mirrors the AI-native product design shift on the product side. The maker rung shrinks. The editor rung grows. The system runs the throughput.

How Vercel, Linear, Stripe, Figma, and Anthropic already operate

The companies running serious AI-era brand systems today are not agencies. They are product companies that treated the brand as a design system artifact years ago, then layered prompt packs and evals on top as the AI tooling matured.

The pattern is consistent. Vercel ships Geist as the token graph behind v0 and every brand surface. Linear's voice doc reads like a writing rubric, not a vibe statement, and the team uses it as the system prompt for AI-assisted writing. Stripe and Figma both treat the design system as the brand system, which is why AI-generated assets at either company drift less than at agencies four times their size. Anthropic runs voice evals on AI copy across product surfaces, which is how their writing stays in voice even as volume scales.

The shape is the same in all five. Brand spec lives as code. Prompt packs ship alongside it. Evals run on every release. The brand director is on the same team as the design system lead, often the same person. If you want help getting your brand system to this shape, hire Brainy. BrandBrainy ships brand operating systems built for AI throughput.

The cautionary tales of thin systems running AI volume

For every brand running a real AI-era system there are three brands running AI assets through a thin system and watching the public results.

Klarna ran AI-generated marketing visuals in 2024 that read inconsistently across regions, with off-brand color casts and proportions that did not match the existing identity. The brand team did not have a token graph the AI could lock to. The drift was visible and the team patched it manually.

Coca-Cola's Create Real Magic gave consumers an AI image generator scoped loosely to the Coke brand. Tens of thousands of outputs landed in public, and a chunk of them looked nothing like Coca-Cola. The system had a logo lockup constraint and not much else. The brand was protected on the legal layer and exposed on the visual layer.

Heinz ran the A.I. Ketchup campaign in 2022 with DALL-E 2 and got lucky. The model had been trained on so much Heinz imagery that the outputs reliably looked like Heinz. The campaign worked because a century of locked-in identity had made Heinz the look the AI defaulted to. Most brands do not have that training data advantage. Running the same playbook in 2026 without a real spec is gambling.

The lesson is the same across all three. A brand system that ran fine for human-paced output cannot govern AI-paced output without the new layer underneath it. The same logic applies upstream of identity work, including brand naming, where the spec has to anchor the model.

The AI-readiness checklist for brand systems

Run this on your brand and you will know in twenty minutes whether the system is AI-ready.

One. Token coverage. Are color, type, spacing, motion, motif, and voice all expressed as machine-readable values, not paragraphs? If any still live as adjectives, the system fails on that axis.

Two. Prompt pack inventory. Does the brand ship prompt packs for the AI tools the team uses, scoped to the surfaces those tools cover? If the team writes prompts ad hoc, the brand drifts on every call.

Three. Eval rubric. Is there at least one automated check that scores AI output against the spec, and does it run before output ships? A brand without evals has no measurement loop.

Four. Editor cadence. Is there a human reviewing the eval results on a regular cadence, updating the spec, and curating the edges? Without an editor the system runs open-loop.

Five. Drift monitoring. Is the team watching for off-brand patterns in shipped output and tuning the spec or the prompt pack when patterns appear? A static system rots.

Fail on three or more and you are running a 2018 system in a 2026 environment. The cost is invisible until AI volume scales, and then the drift is everywhere.
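The scoring rule is simple enough to encode, which makes the audit easy to rerun every quarter. This is a hypothetical sketch: the field names are shorthand for the five axes, and deciding whether each axis passes is still a judgment call the code does not make.

```python
from dataclasses import dataclass, fields


@dataclass
class ReadinessAudit:
    """One boolean per checklist axis: True means the brand passes that axis."""
    token_coverage: bool
    prompt_pack_inventory: bool
    eval_rubric: bool
    editor_cadence: bool
    drift_monitoring: bool

    def failed_axes(self) -> list[str]:
        # Field order matches the checklist order, so failures list in checklist order.
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def runs_legacy_system(self) -> bool:
        """The checklist's rule: failing three or more axes means a 2018 system."""
        return len(self.failed_axes()) >= 3
```

For example, `ReadinessAudit(True, False, False, True, False)` fails prompt packs, evals, and drift monitoring, so `runs_legacy_system()` returns `True`.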

FAQ

What is an AI brand system?

A brand operating system designed for AI-paced output. Four parts: machine-readable tokens the AI consumes as values, prompt packs that ship as the model-facing version of the guidelines, brand evals that automatically score output against the spec, and a human editor who governs the system. It replaces the artifact-pile brand book with a running OS.

How is this different from a normal brand system?

A normal brand system is a stack of artifacts (logo files, swatches, type specs, voice doc) designed for humans to read before making each asset. An AI brand system adds the structured layer the AI needs (tokens, prompts, evals, editor) so the brand stays consistent at volume without a human approving every output.

What are brand evals?

Automated checks that score AI-generated output against the brand spec. They include contrast checks, voice rubric scoring, layout grammar tests, motif compliance, and off-brand pattern detection. They turn brand taste into measurable tests, which is the only way to govern thousands of AI outputs a day.

Do I still need a logo and a palette?

Yes. The classic stack is the input layer. The shift is that logo, palette, type, and voice all need to be expressed as machine-readable tokens with measurable behaviors, and the system needs prompt packs and evals layered on top.

Which companies are doing this well in 2026?

Vercel (Geist token system), Linear (structured voice rubric and prompt packs), Stripe and Figma (design systems that doubled as brand systems), and Anthropic (voice evals across AI-generated copy). Product companies treating brand as code, not agencies treating brand as artifact stacks.

What this means for brand teams in 2026

Brand systems are no longer artifact stacks. They are operating systems for AI generation, and the brands that get the OS right will run circles around the brands still shipping a 60-page PDF. The shift is from documents to specs, adjectives to rubrics, approval queues to eval loops, makers to editors.

If you want a brand system that survives AI throughput, hire Brainy. BrandBrainy ships brand operating systems built for ten thousand outputs a day. Token graph, prompt packs, eval rubric, editor cadence. The four parts that turn a brand from a PDF into an OS. The volume is here. The system has to match it.
