Design Critique Frameworks: How to Run Reviews That Actually Improve the Work

A working designer's guide to running and surviving design critique. The frameworks, the rules, and the structure that turns reviews from ego defense into actual feedback.

Most design reviews are theater. The senior designer talks for 20 minutes about a button color, the junior designer nods, the work ships unchanged or worse. Nobody learns anything, the work does not improve, and the team starts dreading the meeting that was supposed to make them sharper.

This paper is the working version: the frameworks that turn critique into a tool, the rules that keep ego out of the room, and the structure that makes feedback actually useful for the person whose work is on the screen. No "design culture" platitudes, no manifestos, just the operating procedure of a team that ships better work because they review it well.

What design critique actually is

Design critique is structured peer review of in-progress work. The goal is to surface problems the designer cannot see from inside their own draft and to test the work against the user, the brief, and the system before it ships. Critique is not approval, it is not endorsement, and it is not a vote.

A critique is also not a presentation. The designer is not there to defend the work. The reviewers are not there to admire it. Both sides are there to make the next iteration of the work better than the current one.

The design portfolio guide covers how individual work gets evaluated externally. This paper goes deep on internal team review, which has different goals and very different rules.

Why most critiques fail

The same five failures show up in almost every team that struggles with critique. If the meeting feels exhausting and the work does not improve, look here first.

The designer is presenting, not asking. The work gets walked through start to finish, the reviewers wait politely, no specific feedback gets requested. Reviewers default to high-level reactions because nothing was scoped.

Reviewers are competing. Each person tries to spot the smartest issue first. The conversation drifts to whoever can sound most senior. The designer leaves with twelve contradictory opinions and no clear path forward.

Personal taste gets dressed as principle. A reviewer dislikes the typeface and frames it as a hierarchy issue. A reviewer prefers a different layout pattern and frames it as accessibility. Without a framework, taste wins.

No one writes anything down. The conversation happens, the meeting ends, the designer remembers maybe 30% of it. The next critique covers the same ground.

The critique happens too late. The work is already 90% done. Major restructure feedback gets ignored because there is no time. Critique gets reduced to polishing.

Each of these is fixable. The fixes are the rest of this paper.

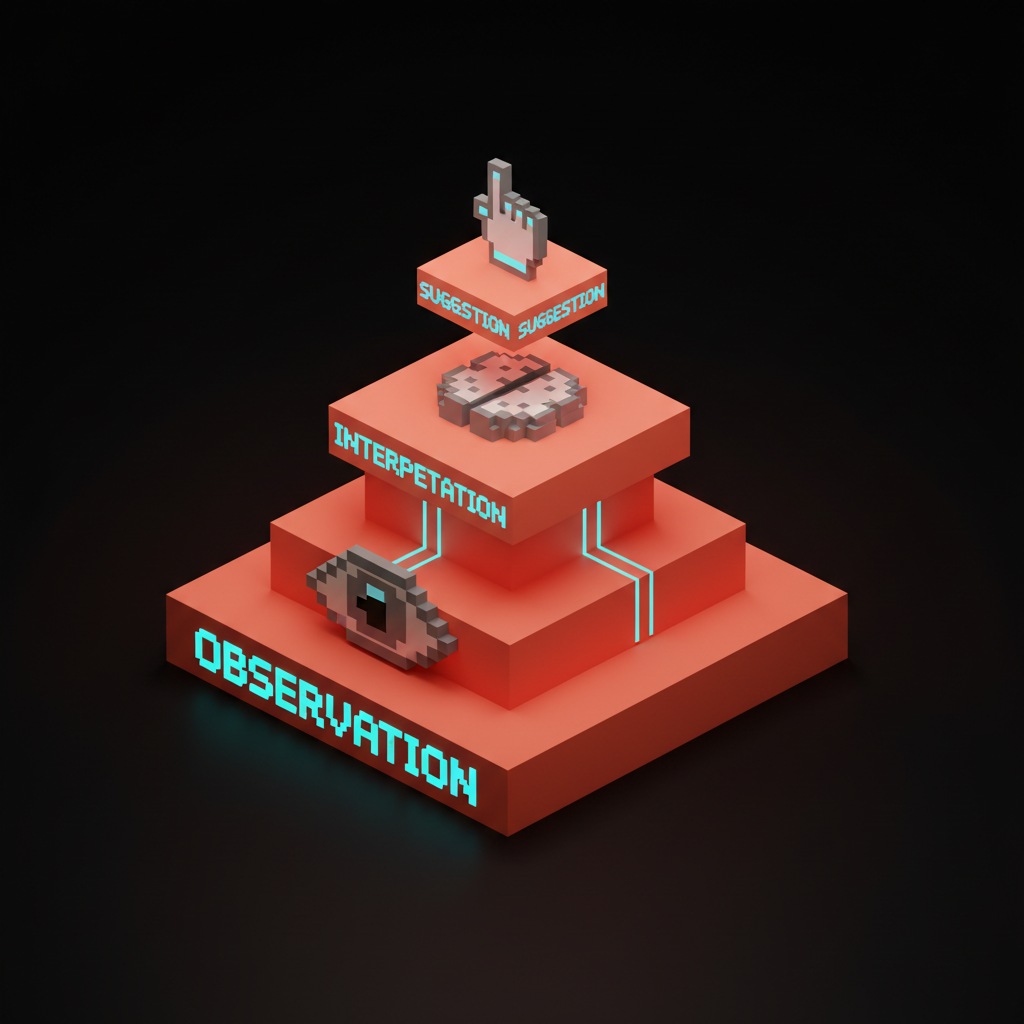

The three-layer feedback model

Every piece of useful design feedback fits into one of three layers. Reviewers who can name the layer they are giving stay focused. Reviewers who cannot name the layer drift into taste.

Layer 1: Observation

Observation is what the reviewer literally sees on the screen. No interpretation, no judgment.

"The CTA is below the fold on a 1440 wide viewport." That is observation. "The button is 32 pixels tall." That is observation. "Three different sans-serifs are visible on this page." That is observation.

Observations are non-negotiable. The designer can verify them on the screen in real time. They are the safest layer because they cannot be argued with by either side.

Layer 2: Interpretation

Interpretation is what the observation might mean for the user, the brand, or the system.

"The CTA being below the fold means users on that viewport will likely leave or scroll past it before realizing what the page is asking them to do." That is interpretation. "The three sans-serifs read as inconsistent, which signals to a user that the brand voice is unclear." That is interpretation.

Interpretations are open to discussion. Two people can interpret the same observation differently. That is the productive part of critique. Interpretation is where the team's collective experience adds value to the designer's individual draft.

Layer 3: Suggestion

Suggestion is what the reviewer would consider doing about the interpretation.

"You could test moving the CTA above the fold or adding a sticky CTA on scroll." That is suggestion. "You could consolidate to one sans-serif for body and one display font, then test the hierarchy with a quick squint test." That is suggestion.

Suggestions are optional for the designer to take. The designer owns the work. The reviewer is offering options, not commands. A critique where suggestions are mandatory is not critique, it is a chain of command.

Critique frameworks that actually work

A framework is a script for the meeting. It removes the cognitive load of figuring out how to give feedback in the moment and lets the reviewers focus on the work itself.

Framework 1: Goal, Rationale, Feedback

The fastest framework to install. Three steps, ten minutes per piece of work.

Step one: Designer states the goal. "I am trying to get a user from the home page to a paid signup in one click." Two sentences max.

Step two: Designer walks through the rationale. "I put the CTA in the hero because the previous test showed 60% of conversions happen above the fold. I chose the green because the brand uses it for primary actions." Three minutes max.

Step three: Reviewers give feedback against the goal. Every comment must connect back to the stated goal. "Does the CTA support the goal?" not "Is the CTA the right shade of green?"

Feedback that does not connect to the goal gets parked in a separate list for later. The meeting stays focused. The designer leaves with a clear path forward.

Framework 2: I Like, I Wish, What If

Borrowed from IDEO and adapted for design teams. Useful when the work is early-stage and exploratory.

I like: What is working. Reviewers name specific elements they think the work should keep. Forces positive observation, which most critique skips.

I wish: What the reviewer would change. Phrased as a wish, not a command. "I wish the contrast were stronger between the headline and the body."

What if: Speculative alternatives. "What if the CTA were a sticky element instead of inline?" Opens up directions the designer might not have considered.

The framework works because it lowers the stakes. Wishes and what-ifs feel collaborative. Direct critique often feels adversarial even when it is not.

Framework 3: The Decision Stack

Best for late-stage work where the team is deciding what ships.

Decision required: What specifically does the designer need a decision on? "Should the secondary CTA stay or get cut?" Not "review this mockup."

Options on the table: What did the designer consider? Show the alternates that were rejected. Reviewers can ask why specific options were eliminated.

Recommendation: What does the designer recommend and why?

Risks: What could go wrong with the recommendation?

The reviewer's job is to test the recommendation against the risks. Did the designer miss a risk? Are the risks weighted correctly? Is the recommendation actually the strongest of the options shown?

This framework forces designers to do the work before the meeting. The meeting becomes a test of the thinking, not a generation of new thinking from scratch.

Rules that keep the room functional

Frameworks structure the meeting. Rules govern the behavior inside it. Without rules, even the best framework collapses into the same loose conversation that did not work last time.

Rule 1: The designer scopes the feedback

Before the critique starts, the designer states what kind of feedback they want. "I am looking for feedback on the hierarchy and the CTA. The color palette is locked, the copy is final." This protects the designer's time and the reviewers' attention.

If a reviewer wants to comment on something out of scope, they note it for after the meeting or for a separate session. They do not derail the critique.

Rule 2: One conversation at a time

Side conversations kill critique. The whole point is collective attention on one piece of work. If two reviewers start debating among themselves, the facilitator pulls them back to the group. If three things need to be discussed, they get discussed sequentially, not in parallel.

Rule 3: Specific over general

"This feels off" is not feedback. "The CTA loses contrast against the gradient background, which probably hurts visibility in light mode" is feedback. Reviewers who give general reactions get asked to be specific or to pass.

Rule 4: No prescriptions without observation

A reviewer cannot recommend a change without first stating the observation that triggered it. "Make the button blue" is a prescription without observation. "The current red button reads close to your error state, which might confuse users in critical flows. You could try blue or a different red" is observation, interpretation, suggestion.

This rule is the single most powerful change a team can install. It forces reviewers to think before they speak and gives the designer the reasoning, not just the demand.
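For a team that logs critique comments in a tool or a script, Rule 4 can be sketched as a check on a three-layer record. This is a minimal Python sketch under assumptions, not a prescribed implementation: the `Feedback` class and the `check_rule_4` function are hypothetical names introduced here for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Feedback:
    """One comment split into the three layers (hypothetical structure)."""
    observation: Optional[str] = None     # what the reviewer literally sees
    interpretation: Optional[str] = None  # what it might mean for the user
    suggestion: Optional[str] = None      # what the reviewer would consider doing


def check_rule_4(fb: Feedback) -> str:
    """Return 'ok', or a prompt a facilitator could use to redirect the reviewer."""
    has_observation = bool(fb.observation and fb.observation.strip())
    has_suggestion = bool(fb.suggestion and fb.suggestion.strip())
    if has_suggestion and not has_observation:
        return "prescription: state the observation first"
    if not has_observation:
        return "incomplete: start with what you see"
    return "ok"


bare = Feedback(suggestion="Make the button blue")
full = Feedback(
    observation="The current red button reads close to the error state",
    interpretation="That might confuse users in critical flows",
    suggestion="Try blue or a different red",
)
print(check_rule_4(bare))  # prescription: state the observation first
print(check_rule_4(full))  # ok
```

The check only verifies that an observation is present. Whether the observation is accurate is still something the room confirms on the screen.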

Rule 5: Capture everything

Someone in the room takes notes. Observations, interpretations, suggestions, decisions, follow-ups. The designer should not be the note-taker because they are too cognitively loaded responding to feedback.

The notes get shared with the designer immediately after the meeting. The designer marks each note as accepted, rejected with reason, or parked for later. The next critique starts with what changed since last time.
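The triage step described above can be sketched as a small data structure. This is an illustrative Python sketch, assuming notes live in a script or spreadsheet export; `Status`, `Note`, `triage`, and `changed_since_last` are hypothetical names, and the sample notes are invented for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Status(Enum):
    OPEN = "open"          # captured, not yet triaged by the designer
    ACCEPTED = "accepted"
    REJECTED = "rejected"  # must carry a reason
    PARKED = "parked"      # kept for later


@dataclass
class Note:
    text: str
    status: Status = Status.OPEN
    reason: Optional[str] = None


def triage(note: Note, status: Status, reason: Optional[str] = None) -> Note:
    """Mark a note; rejecting without a reason is not allowed."""
    if status is Status.REJECTED and not reason:
        raise ValueError("a rejected note needs a reason")
    note.status = status
    note.reason = reason
    return note


def changed_since_last(notes: List[Note]) -> List[str]:
    """Accepted notes are what the designer should show at the next critique."""
    return [n.text for n in notes if n.status is Status.ACCEPTED]


notes = [
    Note("CTA is below the fold at 1440 wide"),
    Note("Three sans-serifs are visible on the page"),
    Note("Consider a sticky CTA on scroll"),
]
triage(notes[0], Status.ACCEPTED)
triage(notes[1], Status.REJECTED, reason="Typefaces are out of scope for this round")
triage(notes[2], Status.PARKED)
print(changed_since_last(notes))  # ['CTA is below the fold at 1440 wide']
```

Requiring a reason on rejection keeps the record useful to reviewers: they can see that the note was weighed, not ignored.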

Rule 6: Time-boxed and outcome-defined

A critique meeting has a hard end time. Each piece of work gets a fixed slot, usually 15 to 30 minutes. The facilitator enforces it. Running long is the fastest way to lose team energy and to make people stop bringing work to the meeting.

Each slot ends with a stated outcome. "Designer will move CTA above the fold and test contrast on the gradient background. Will share v2 in next critique." If there is no outcome, the meeting did not produce value.
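A facilitator who wants the hard end time fixed before the meeting starts could compute it from the slot length. The sketch below is one possible way to do that in Python; `build_agenda` is a hypothetical helper, the 15 to 30 minute bounds come from this rule, and the piece names and date are invented for the example.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def build_agenda(
    start: datetime, pieces: List[str], slot_minutes: int = 20
) -> List[Tuple[str, datetime, datetime]]:
    """Give each piece of work a fixed, back-to-back slot."""
    if not 15 <= slot_minutes <= 30:
        raise ValueError("slot length should be between 15 and 30 minutes")
    agenda = []
    cursor = start
    for piece in pieces:
        end = cursor + timedelta(minutes=slot_minutes)
        agenda.append((piece, cursor, end))
        cursor = end
    return agenda


agenda = build_agenda(
    datetime(2026, 1, 12, 10, 0),
    ["Checkout flow", "Pricing page", "Onboarding"],  # hypothetical pieces
    slot_minutes=20,
)
hard_end = agenda[-1][2]
print(hard_end.strftime("%H:%M"))  # 11:00
```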

When critique should happen

Late-stage critique is almost always too late. The most leverage critique gets is at the points where direction is still cheap to change.

Three critique points per project

The minimum schedule that produces shipped work that improves over time:

| Phase | Goal | Framework |
|---|---|---|
| Direction | Are we solving the right problem? | I Like, I Wish, What If |
| Mid-fidelity | Is the structure right? | Goal, Rationale, Feedback |
| Pre-ship | Is this ready to ship? | Decision Stack |

Each phase has different goals and benefits from a different framework. Skipping any of them produces predictable failure modes.

Skipping direction critique means the team finishes a polished mockup of the wrong solution. Discovered too late, this is the most expensive mistake a team can make.

Skipping mid-fidelity critique means structural issues get found at high fidelity, where they cost five times more to fix. The team ships compromised work because there is no time to restart.

Skipping pre-ship critique means avoidable issues hit production. The ship doc gets written without a second set of eyes and the team finds out about the broken state in user feedback.
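The three-point schedule can be kept as a lookup that a project checklist reads from. This is a minimal Python sketch under assumptions: `SCHEDULE` mirrors the phase table in this section, and `missing_phases` is a hypothetical helper for spotting a skipped critique point.

```python
from typing import Dict, List, Tuple

# phase -> (question the critique answers, framework to run)
SCHEDULE: Dict[str, Tuple[str, str]] = {
    "direction": ("Are we solving the right problem?", "I Like, I Wish, What If"),
    "mid-fidelity": ("Is the structure right?", "Goal, Rationale, Feedback"),
    "pre-ship": ("Is this ready to ship?", "Decision Stack"),
}


def missing_phases(held: List[str]) -> List[str]:
    """Return the critique points a project has not held yet, in schedule order."""
    held_set = {phase.lower() for phase in held}
    return [phase for phase in SCHEDULE if phase not in held_set]


print(missing_phases(["Direction", "Pre-ship"]))  # ['mid-fidelity']
print(SCHEDULE["pre-ship"][1])                    # Decision Stack
```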

The AI design workflow breakdown covers how to integrate critique into AI-accelerated design loops. Faster generation makes critique more important, not less.

The facilitator role

Most design critiques have no facilitator and that is why they fall apart. The facilitator is not the senior designer in the room. The facilitator is whoever is responsible for keeping the meeting functional.

What the facilitator does

The facilitator owns three things:

- Time. Each piece of work gets its allotted slot. Hard cuts when time runs out. Parked items go to a follow-up list.

- Focus. Pulling reviewers back when they drift, asking clarifying questions when feedback is vague, naming when the conversation has moved off scope.

- Capture. Either taking notes themselves or making sure someone is. The notes are not optional, they are the artifact of the meeting.

The facilitator is not there primarily to give critique. They can add their own feedback only once the structural work of time, focus, and capture is handled. Mixing facilitation with critique splits attention and almost always produces a worse outcome on both.

Rotate the role

The same person facilitating every critique becomes the bottleneck. Rotate the role weekly or biweekly across the team. Junior designers benefit enormously from facilitating because it forces them to think structurally about the meeting, not just about the work.

Senior designers benefit too. Facilitating instead of critiquing forces a senior to listen instead of dominate, which is a skill most senior designers underrate.

How to receive critique

The other half of critique is being the designer in the chair. Most design education skips this entirely. The result is a generation of designers who get defensive when their work is reviewed and dismiss the feedback that would have helped them most.

Five rules for the designer

Listen completely before responding. Do not interrupt to defend a choice. Take the feedback in, write it down, then ask clarifying questions. The instinct to defend kills the value of the meeting.

Separate the observation from the suggestion. A reviewer might suggest a fix you disagree with, but the underlying observation might still be valid. Take the observation, leave the suggestion, find your own fix.

Ask why, not whether. "Why do you think the CTA loses contrast?" produces useful follow-up. "Do you think the CTA loses contrast?" produces a yes or no that ends the conversation.

Park your reactions. If a piece of feedback hits hard, write it down and respond later. Real-time defense produces bad responses on both sides. A 24-hour pause almost always produces a better answer.

Own the decision. The designer owns the work. After critique, the designer decides what to act on. Reviewers offer options, not commands. The designer's job is to weigh the feedback against everything else they know about the project and decide.

Critique in 2026 conditions

Three shifts changed how design critique works. The system has to adapt to all three.

Remote and async critique are the default. The all-hands meeting in a room is rare. Critique now happens in Figma comments, Loom recordings, and shared documents. The frameworks still work, the facilitation still matters, but the timing changes. Async critique should have a clear request, a clear deadline, and a clear decision point. Without those it becomes a comment graveyard.

AI-generated work needs critique more, not less. Tools that generate options in seconds make it tempting to skip critique because the work is "just AI output." That is exactly when critique matters most. AI-generated work is unaccountable by default. Human critique is what makes it accountable.

Cross-functional critique is now standard. Engineers, PMs, and content designers sit in the same critique as visual designers. The frameworks work for everyone. The vocabulary needs to flatten. "Affordance" and "above the fold" are fine, but the whole meeting cannot be designer jargon or non-designers will check out.
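The async requirement described above (a clear request, a clear deadline, a clear decision point) can be checked before a review thread is posted. The following is an illustrative Python sketch, not a feature of Figma or Loom; `AsyncCritiqueRequest` and `missing_fields` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class AsyncCritiqueRequest:
    request: str = ""                # what feedback the designer is asking for
    deadline: Optional[date] = None  # when comments close
    decision_point: str = ""         # what gets decided once comments close


def missing_fields(req: AsyncCritiqueRequest) -> List[str]:
    """List what still has to be filled in before the request goes out."""
    missing = []
    if not req.request.strip():
        missing.append("request")
    if req.deadline is None:
        missing.append("deadline")
    if not req.decision_point.strip():
        missing.append("decision_point")
    return missing


draft = AsyncCritiqueRequest(request="Feedback on hierarchy and the CTA only")
print(missing_fields(draft))  # ['deadline', 'decision_point']
```

An empty list means the request is ready to post. Anything else is a prompt to finish scoping before the thread turns into a comment graveyard.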

The design handoff glossary covers how critique connects to the broader handoff discipline. Critique that produces no shareable artifact is critique that does not survive the handoff.

FAQ

What is a design critique framework?

A framework is a structured script for running a design review meeting. It defines who speaks when, what kind of feedback gets asked for, and how the meeting ends. The most common frameworks are Goal-Rationale-Feedback, I Like-I Wish-What If, and the Decision Stack. Each fits a different stage of the work.

How do you give good design feedback?

Use the three-layer model. Start with observation (what you see), then interpretation (what it might mean), then suggestion (what you would consider doing). Skip any layer and the feedback gets weaker. Skip observation entirely and the feedback becomes prescription, which the designer can dismiss.

How long should a design critique meeting be?

15 to 30 minutes per piece of work, hard time-boxed. A 60-minute critique covering one piece of work is almost always either over-scoped or running on a poor framework. Cap each slot, capture the outcomes, and move on. Most teams critique 2 to 4 pieces of work per session.

How often should a design team run critique?

Weekly is the baseline for a small team shipping regularly. Biweekly works for a team with longer project cycles. The schedule matters less than the consistency. A team that runs weekly critique for a year improves faster than a team that runs ad-hoc critique whenever someone asks.

How do you stop critique from becoming personal?

Install the framework, install the rules, and rotate the facilitator. The frameworks separate observation from prescription, which is where most personal-feeling critique starts. The facilitator catches the slips. Over time the team internalizes the structure and personal-feeling critique stops happening because the language no longer supports it.

How do you give critique to a senior designer?

The same way you give critique to anyone else. Use the three-layer model, connect feedback to the stated goal, and let the senior designer own the decision. Senior designers who cannot take critique are a bigger problem than the critique itself. A team that quietly avoids reviewing senior work is a team that ships compromised senior work.

Critique is a discipline, not a meeting

The team that runs critique well ships better work because they catch problems earlier, get more perspectives on each decision, and develop a shared vocabulary for design quality. The team that runs critique badly ships either uneven work or polished-but-wrong work, and they wonder why the meeting they hold every week is not helping.

The fix is structural. Pick a framework. Install the rules. Schedule critique at three points per project, not at the end. Capture the outcomes. Rotate the facilitator. Receive critique without defense. Give critique without prescription.

The cost of installing this discipline is two or three meetings of mild discomfort while the team adjusts. The payoff is a team that gets sharper on every project and a body of work that improves over time instead of regressing toward whoever has the loudest opinion.

If you want a team that runs this discipline as part of every project, hire Brainy. We embed with internal design teams and install critique systems alongside the brand and product work.
